Targeted attacks take many forms, but most of them share one common tactic: exploitation. To achieve their goal, attackers need to penetrate different systems along the way, and they do so by exploiting unpatched or unknown vulnerabilities. The most common forms of exploitation are a malicious document that exploits vulnerabilities in Adobe Reader, or a malicious URL that exploits the browser, in order to gain a foothold inside the endpoint computer. Zero-day is the buzzword of the day in the security industry, and everyone uses it without necessarily understanding what it really means. It hides a complex world of software architectures, vulnerabilities, and exploits that only a few thoroughly understand. Someone asked me to explain the topic, again, and when I delved deep into the explanation I came to realize something quite surprising. Please bear with me, this is going to be a long post 🙂

Overview

I will begin with some definitions of the different terms in the area. These are my own personal interpretations of them; they are not taken from Wikipedia.

Vulnerabilities

This term usually refers to problems in software products: bugs, bad programming style, or logical problems in the implementation of the software. Software is not perfect, and one could argue that it can't be. Furthermore, the people who build the software are even less perfect, so it is safe to assume such problems will always exist in software products. Vulnerabilities exist in operating systems, in runtime environments such as Java and .NET, and in specific applications, whether they are written in high-level languages or native code. Vulnerabilities also exist in hardware products, but for the sake of this post I will focus on software, as the topic is broad enough even with this focus. One of the main contributors to the existence and growth in the number of vulnerabilities is the ever-growing complexity of software products; it simply increases the odds of creating new bugs that are difficult to spot. A vulnerability always relates to a specific version of a software product, which is basically a static snapshot of the code used to build the product at a specific point in time. Time plays a major role in the business of vulnerabilities, maybe the most important one.

Assuming vulnerabilities exist in all software products, we can categorize them into three groups based on the level of awareness of these vulnerabilities:

- Unknown Vulnerability – A vulnerability that exists in a specific piece of software and of which no one is aware. There is no proof that it exists, but experience teaches us that it does and is just waiting to be discovered.

- Zero-Day – A vulnerability that has been discovered by a certain group of people or a single person while the vendor of the software is not aware of it, so it is left open without a fix or any awareness of its presence.

- Known Vulnerabilities – Vulnerabilities that have been brought to the awareness of the vendor and of customers, either in private or as public knowledge. Such vulnerabilities are usually identified by a CVE number. During the first period following discovery, the vendor works on a fix, or a patch, which will become available to customers. Until customers update the software with the fix, the vulnerability remains open to attack. So in this category, each installation of the software can have patched or unpatched known vulnerabilities. In a way, the patch always comes with a new software version, so a specific product version either contains unpatched vulnerabilities or it does not; there is no such thing as a patched vulnerability, there are only new versions with fixes.
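The three awareness groups above can be expressed as a small decision rule. This is only a sketch of my own categorization, not an industry taxonomy; the class, field, and function names are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Awareness(Enum):
    UNKNOWN = "unknown vulnerability"
    ZERO_DAY = "zero-day"
    KNOWN_UNPATCHED = "known, fix not installed"
    KNOWN_FIXED = "known, running a version with the fix"


@dataclass
class Vulnerability:
    # All three fields are illustrative assumptions, not a real data model.
    discovered_by_someone: bool
    vendor_aware: bool
    fix_installed: bool = False  # true only once the fixed version is deployed


def classify(v: Vulnerability) -> Awareness:
    """Map one vulnerability, on one installation, to an awareness group."""
    if not v.discovered_by_someone:
        return Awareness.UNKNOWN
    if not v.vendor_aware:
        return Awareness.ZERO_DAY
    # Known to the vendor: the state now depends on the installed version.
    return Awareness.KNOWN_FIXED if v.fix_installed else Awareness.KNOWN_UNPATCHED


print(classify(Vulnerability(discovered_by_someone=True, vendor_aware=False)))
```

Note that the last two states are a property of a specific installation rather than of the vulnerability itself, which matches the point that only new versions carry fixes.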

There are other ways to categorize vulnerabilities: by the exploitation technique, such as buffer overflow or heap spraying, or by the type of bug that leads to the vulnerability, such as a logical flaw in the design or a wrong implementation.

Exploits

An exploit is a piece of code that abuses a specific vulnerability in order to cause something unexpected to occur, initiated by the attacked software. This means either gaining control of the execution path inside the running software, so the exploit can run its own code, or just achieving a side effect such as crashing the software or causing it to do something unintended by its original design. Exploits are usually associated with malicious intentions, although from a technical point of view an exploit is just a mechanism to interact with a specific piece of software via an open vulnerability. I once heard someone refer to it as an "undocumented API" :).

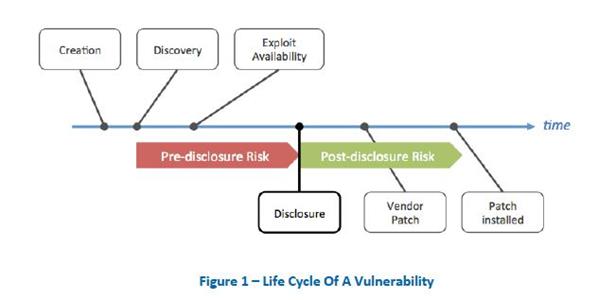

The picture above, from Infosec Institute, illustrates the vulnerability/exploit life cycle.

The time span colored in red represents the period in which a discovered vulnerability is considered a zero-day, and the span colored in green is the period in which the vulnerability is known but unpatched. The post-disclosure risk is always dramatically higher, as the vulnerability has become public knowledge and the bad guys can and do exploit it more frequently than in the earlier stage. Closing the gap in the patching period is the only step that can be taken toward reducing this risk.
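To make the two windows concrete, here is a minimal sketch that measures them from four milestone dates. The dates are invented for illustration and the function and key names are hypothetical; real life cycles rarely have such clean milestones.

```python
from datetime import date


def exposure_windows(discovered, vendor_aware, patch_released, patch_installed):
    """Return the length in days of each window in the life cycle."""
    return {
        # Red span: someone knows about the flaw, the vendor does not.
        "zero_day": (vendor_aware - discovered).days,
        # Green span, part 1: the vendor is working on a fix.
        "waiting_for_patch": (patch_released - vendor_aware).days,
        # Green span, part 2: the fix exists but is not deployed yet.
        "waiting_for_install": (patch_installed - patch_released).days,
    }


# Invented example dates.
windows = exposure_windows(
    discovered=date(2023, 1, 1),
    vendor_aware=date(2023, 1, 31),
    patch_released=date(2023, 2, 14),
    patch_installed=date(2023, 3, 16),
)
unpatched_days = windows["waiting_for_patch"] + windows["waiting_for_install"]
print(windows, unpatched_days)
```

Of the three numbers, only the last one, the time between patch release and installation, is fully under the control of the enterprise, which is why patch automation matters so much.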

The Math Behind Targeted Attacks

Most targeted attacks today use the exploitation of vulnerabilities to achieve three goals:

- Penetrate an employee’s endpoint computer by different techniques, such as malicious documents sent by email or malicious URLs. Those malicious documents/URLs contain malicious code that seeks specific vulnerabilities in the host program, such as the browser or the document reader. During a rather naïve reading experience, the malicious code is able to sneak into the host program, which serves as the penetration point.

- Gain higher privilege once malicious code already resides on a computer. Many times, an attack that was able to sneak into the host application doesn't have enough privilege to continue its attack on the organization. The malicious code then exploits vulnerabilities in the runtime environment of the application, which can be the operating system or the JVM, for example, in order to gain elevated privileges.

- Lateral movement – once the attack enters the organization and wants to reach other areas in the network to achieve its goals, many times it exploits vulnerabilities in other systems that reside on its path.

So, from the point of view of the attack itself, we can definitely identify three main stages:

- An attack at Transit Pre-Breach – This state means an attack is moving around on its way to the target and in the target prior to the exploitation of the vulnerability.

- An attack at Penetration – This state means an attack is exploiting a vulnerability successfully to get inside.

- An attack at Transit Post Breach – This state means an attack has started running inside its target and within the organization.

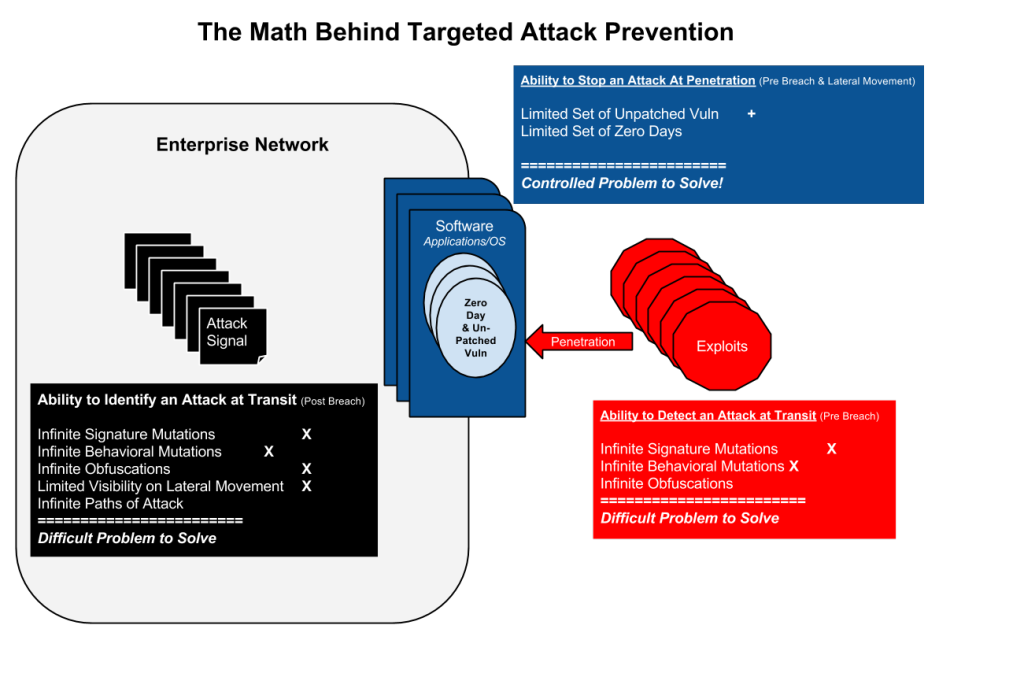

The diagram above quantifies the complexity inherent in each attack stage, from both the attacker's and the defender's side. Below are descriptions of each area, followed by the conclusion.

Ability to Detect an Attack at Transit Pre-Breach

Those are the red areas in the diagram. Here the attack is on its way, prior to exploitation. While it is on its way, the enterprise can scan the binary artifacts of the attack, whether in the form of network packets, a visited website, or a specific document traveling via email servers or arriving at the target computer. This approach is called static scanning. The enterprise can also emulate the expected interaction with the artifact in a controlled environment (for example, opening a document in a sandbox) and try to identify patterns in the behavior of the sandbox that resemble a known attack pattern; this is called behavioral scanning.

Attacks pose three challenges towards security systems at this stage:

- Infinite Signature Mutations – Static scanners look for specific binary patterns in a file that should match a malicious code sample in their database. Attackers outsmarted these tools long ago: they have automation tools that change those signatures randomly, with the ability to create an infinite number of static mutations. So a single attack can take an infinite number of forms in its packaging.

- Infinite Behavioral Mutations – The security industry evolved from static scanners towards behavioral scanners, where the "signature" of a behavior eliminates the problems induced by static mutations and the sample base of behaviors is dramatically smaller. A single behavior can be decorated with many static mutations, and behavioral scanners reduce this noise. The challenges posed by the attackers make behavioral mutations infinite in nature as well, and they are twofold:

  - An infinite number of mutations in behavior – In the same way attackers outsmart the static scanners by creating an infinite number of static decorations on the attack, here as well the attackers can add dummy steps or reshuffle the attack steps. The result is eventually the same, but from a behavioral pattern point of view it presents a different behavior. The spectrum of behavioral mutations seemed at first narrower than that of static mutations, but with the advancement of attack generators, even that has been achieved.

  - Sandbox evasion – Attacks that are scanned for bad behavior in a sandboxed environment have developed advanced capabilities to detect whether they are running in an artificial environment. If they detect that they are, they pretend to be benign, which means no exploitation takes place. This is currently an ongoing race between behavioral scanners and attackers, and attackers seem to have the upper hand.

- Infinite Obfuscation – This technique is related to the infinite static mutations factor but deserves specific attention. In order to deceive the static scanners, attackers hide the malicious code itself by running some transformation on it, such as encryption, and adding a small piece of code that is responsible for decrypting it on the target prior to exploitation. Again, the range of options for obfuscating code is infinite, which makes the static scanners’ work more difficult.

This makes the challenge of capturing an attack prior to penetration very difficult, bordering on impossible, and the difficulty only increases with time. I am not by any means implying such security measures don't serve an important role; today they are the main safeguards keeping the enterprise from turning into a zoo. I am just saying it is a very difficult problem to solve and that, in terms of ROI (if such a thing as security ROI exists), there are other areas in which a CISO had better invest.
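The static mutation and obfuscation challenges described above can be illustrated with a toy sketch. It uses a harmless placeholder string in place of real code, and two deliberately simplistic "scanners" (an exact-hash lookup and a byte-pattern search) that I made up for the example; real products are far more sophisticated, but the asymmetry is the same.

```python
import hashlib

# Harmless stand-in for a known malicious sample (assumption for illustration).
SAMPLE = b"PLACEHOLDER-PAYLOAD"
KNOWN_HASHES = {hashlib.sha256(SAMPLE).hexdigest()}
KNOWN_PATTERN = b"PAYLOAD"


def hash_scanner(data: bytes) -> bool:
    """Flag data whose SHA-256 matches a known sample exactly."""
    return hashlib.sha256(data).hexdigest() in KNOWN_HASHES


def pattern_scanner(data: bytes) -> bool:
    """Flag data that contains a known byte pattern anywhere."""
    return KNOWN_PATTERN in data


def pad(data: bytes, count: int) -> bytes:
    """Static mutation: append junk bytes, one variant per value of count."""
    return data + b"\x00" * count


def xor(data: bytes, key: int) -> bytes:
    """Obfuscation: single-byte XOR; applying it twice restores the input."""
    return bytes(b ^ key for b in data)


padded = pad(SAMPLE, 1)
encoded = xor(SAMPLE, 0x5A)

print(hash_scanner(SAMPLE), hash_scanner(padded))        # hash match is lost
print(pattern_scanner(padded), pattern_scanner(encoded))  # pattern is hidden
print(xor(encoded, 0x5A) == SAMPLE)                       # decoder restores it
```

Every extra padding byte or different key yields a new variant for the defender to recognize, while the attacker's cost per variant is close to zero.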

Ability to Stop an Attack at Transit Post Breach

Those are the black areas in the diagram. An attack that has already gained access to the network can take an infinite number of possible attack paths to achieve its goals. Once an attack is inside the network, the relevant security products try to identify it. Such technologies revolve around big data/analytics, which try to identify activities in the network that imply malicious activity, or again around network monitors that listen to the traffic and try to identify artifacts or static or behavioral patterns of an attack. Those tools rely on different informational signals which serve as attack signals.

Attacks pose multiple challenges towards security products at this stage:

- Infinite Signature Mutations, Infinite Behavioral Mutations, Infinite Obfuscation – These are the same challenges as described before, since the attack within the network can have the same characteristics as it had before entering the network.

- Limited Visibility on Lateral Movement – Once an attack is inside, its next steps are usually to establish strongholds in different areas of the network. Such movement is hardly visible, as it eventually consists of legitimate actions: once an attacker gets a higher privilege, it conducts actions which are considered legitimate but of high privilege, and it is very difficult for a machine to tell the good ones from the bad ones. Add on top of that the fact that persistent attacks usually use technologies that enable them to remain stealthy and invisible.

- Infinite Attack Paths – The path an attack can take inside the network has infinite options, especially considering that a targeted attack and its goals are unknown to the enterprise.

This makes the ability to deduce that there is an attack, along with its boundaries and goals, from specific signals coming from different sensors in the network very limited. Sensors deployed on the network never provide true visibility into what's really happening in the network, so the picture is always partial. Add to that deception techniques about the path of attack and you stumble into a very difficult problem. Again, I am not arguing that security analytics products which focus on post-breach are not important; on the contrary, they are very important. I am just saying it is the beginning of a very long path towards real effectiveness in that area. Machine learning is already playing a serious role, and AI will definitely be an ingredient in a future solution.

Ability to Stop an Attack at Penetration Pre-Breach and on Lateral Movement

Those are the dark blue areas in the diagram. Here the challenge is reversed onto the attacker, since there is a limited number of entry points into the system. Entry points, a.k.a. vulnerabilities. Those are:

- Unpatched Vulnerabilities – These are open "windows" which have not been covered yet. The main challenge here for the IT industry is about automation, dynamic updating capabilities, and prioritization. It is definitely an open gap that can potentially be narrowed down to become insignificant.

- Zero Days – This is an unsolved problem. There are many approaches to it, such as ASLR and DEP on Windows, but there is still no bulletproof solution. In the startup scene, I am aware that quite a few are working very hard on a solution. Attackers identified this soft underbelly a long time ago, and it is the main weapon of choice for targeted attacks, which can potentially yield serious gains for the attacker.

This area presents a definite problem, but in a way it seems the most likely one to be solved earlier than the other areas, mainly because the attacker at this stage is at its greatest disadvantage. Right before it gets into the network it has infinite options to disguise itself, and after it gets into the network the action paths it can take are infinite. Here, the attacker needs to go through a specific window, and there aren't too many of those left unprotected out there.

Players in the Area of Penetration Prevention

There are multiple companies/startups which are brave enough to tackle the toughest challenge in the targeted attacks game – preventing infiltration – I call it facing the enemy at the gate. In this ad-hoc list, I have included only technologies which aim to block attacks in real time. There are many other startups which approach static or behavioral scanning in a unique and disruptive way, such as Cylance, CyberReason, or Bit9 + Carbon Black (list from @RickHolland), which were excluded for the sake of brevity and focus.

Containment Solutions

Technologies that isolate the user applications within a virtualized environment. The philosophy behind it is that even if an application is exploited, the attack won’t propagate to the rest of the computer environment and will be contained. From an engineering point of view, I think these guys have the most challenging task, as isolation and usability are inversely correlated when it comes to productivity, and it all involves virtualization on an endpoint, which is a difficult task on its own. Leading players are Bromium and Invincea, well-established startups with very good traction in the market.

Exploitation Detection & Prevention

Technologies which aim to detect and prevent the actual act of exploitation. They range from companies like Cyvera (now the Palo Alto Networks Traps product line), which aim to identify patterns of exploitation, through technologies such as ASLR/DEP and EMET, which aim at breaking the assumptions of exploits by modifying the inner structures of programs and setting traps at “hot” places that are susceptible to attack, up to startups like Morphisec, which employs a unique moving-target concept to deceive and capture attacks in real time. Another long-time player, and maybe the most veteran in the anti-exploitation field, is MalwareBytes. They have a comprehensive offering for anti-exploitation, with capabilities ranging from in-memory deception and trapping techniques up to real-time sandboxing.

At the moment the endpoint market is still controlled by the marketing money poured in by the major players, while their solutions are growing ineffective at an accelerating pace. I believe it is a transition period, and you can already hear voices saying the endpoint market needs a shakeup. In the future, the anchor of endpoint protection will be real-time attack prevention, and static and behavioral scanning extensions will play a minor, feature-completion role. So pay careful attention to the technologies mentioned above, as one of them (or maybe a combination :) will bring the “force” back into balance :)

Advice for the CISO

Invest in closing the gap posed by vulnerabilities. From patch automation and prioritized vulnerability scanning up to security code analysis for in-house applications, it is all worth it. Furthermore, seek out solutions that deal directly with the problem of zero-days. There are several startups in this area, and their contribution can be of a much higher magnitude than any other security investment in the pre-breach or post-breach phase.